Haptic technology, which simulates the sense of touch through tactile feedback mechanisms, has been described as “doing for the sense of touch what computer graphics does for vision.” Haptics are already common in devices such as smartphones, where touch sensations such as clicks and vibrations enhance the user experience. When it comes to virtual reality, however, it’s far more challenging to translate tactile cues. Auditory and visual feedback are fairly easy, and applications can be controlled using keyboards, joysticks, steering wheels, or, in the case of Kinect, the human body.

But how can a user touch and feel objects inside the virtual world? Can a flat touchscreen convey depth, weight, movement, and shape? Yes, say scientists in the Natural Interaction Research group at Microsoft Research Redmond. Mike Sinclair, Michel Pahud, and Hrvoje Benko mounted a multitouch, stereo-vision 3-D monitor on a robot arm to study how the kinesthetic haptic sense, which relates to motion rather than tactile touch, can augment touchscreen interactions.

The result is Actuated 3-D Display with Haptic Feedback, a project that features a haptic device that provides 3-D physical simulation with force feedback. The system consists of a touchscreen with a robot arm, engineered for instant, sensitive responsiveness, smooth forward and backward movement, and applications that support multitouch screen interactions, force sensing, 3-D visualizations, and depth movement. By moving a finger on the screen, the user can interact with on-screen 3-D objects and experience different force responses that correspond to the physical simulation. Demonstrated in public for the first time during TechFest 2013, the project intrigued attendees, who lined up to try this unique, immersive experience.

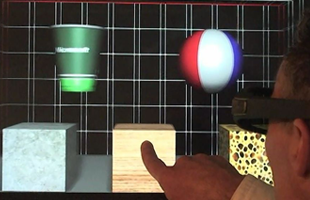

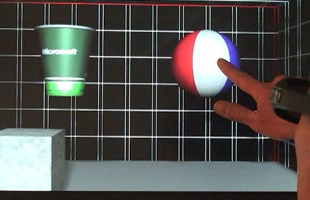

Objects that can be touched and manipulated with the Actuated 3-D Display.

One project application consisted of three virtual 3-D boxes, each with different virtual weights and friction forces corresponding to their supposed material: stone, wood, and sponge. Users could push with a finger on the screen into the virtual space until they encountered one of the boxes, and the device simulated the appropriate resistance through force feedback as the user pushed at each box. The force-feedback monitor responds to convey the sensation of different materials: The stone block “feels” hard to the touch and requires more force to push, while the sponge block is soft and easy to push.
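As a rough illustration of how material-dependent resistance might be computed, the sketch below assigns each box a hypothetical virtual mass and friction coefficient and returns a push-back force once the finger reaches the box. The article does not give the real parameters or force model, so the numbers, the names `Material` and `resistance_force`, and the simple sliding-friction formula are all assumptions.

```python
from dataclasses import dataclass

G = 9.81  # gravitational acceleration in m/s^2


@dataclass(frozen=True)
class Material:
    name: str
    mass_kg: float   # hypothetical virtual mass
    friction: float  # hypothetical friction coefficient


# Illustrative values only; the project's actual parameters are not published here.
STONE = Material("stone", 5.0, 0.8)
WOOD = Material("wood", 1.5, 0.5)
SPONGE = Material("sponge", 0.1, 0.3)


def resistance_force(material: Material, finger_depth: float, box_depth: float) -> float:
    """Return the force (N) pushing back on the finger.

    Depth grows as the finger pushes into the scene. Before the finger
    reaches the box face there is no extra resistance; after contact the
    box resists with a simple sliding-friction force.
    """
    if finger_depth < box_depth:
        return 0.0  # finger has not reached the box yet
    return material.friction * material.mass_kg * G
```

With these assumed values, the stone box resists far more strongly than the wood box, and the sponge box barely resists at all, which matches the ordering the article describes.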

The Power of One Dimension

“I had been interested in the notion of putting a robot behind something you could touch,” Sinclair says. “Originally, I had wanted a robot arm with many degrees of freedom. But complexity, costs, and safety issues narrowed down the options to one dimension of movement. At that point, I was sure that others must have already looked into this scenario, but after looking at the literature, it turned out no one had done this.”

It also turned out that being limited to a robot armature with one dimension of movement—the Z-axis of the applications—provided valuable insights into how much or how little data humans need to detect the shape and type of object being touched.

“Contour detection was a major component of the project,” Pahud says. “The question was, by using your normal haptic sense, could you actually identify the type of object you were touching, even though it’s being presented by a fairly crude device consisting of a 2-D touch screen and a robot arm that moves only in one dimension, forward and back along a track?”

To determine whether the device could simulate contours convincingly, an application presents the user with two rigid shapes of different depths, a cup and a ball. By changing the depth of the screen according to the user’s touch input, the team was able to simulate the surface contour of the 3-D object. In contrast to the force-feedback behavior, this mode of operation can be thought of as setting the screen position with infinite resistance so that the user “feels” the contour of the 3-D object by tracing a finger along its surface.
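The contour mode can be pictured as a lookup from the touch position to a screen depth. The sketch below does this for a ball, modeled as a hemisphere rising from a flat back plane. The function name `ball_depth`, the coordinate convention (depth increases away from the user), and the geometry are assumptions for illustration, not the team's implementation.

```python
import math


def ball_depth(x: float, y: float, cx: float, cy: float,
               radius: float, back_depth: float) -> float:
    """Return the screen depth for a touch at (x, y).

    The ball is centered at (cx, cy) and rests on a back plane located at
    back_depth. Outside the ball's outline the screen sits at the back
    plane; inside it, the screen moves toward the user by the height of
    the sphere's surface above that plane.
    """
    d2 = (x - cx) ** 2 + (y - cy) ** 2
    if d2 >= radius ** 2:
        return back_depth  # touch is off the ball
    return back_depth - math.sqrt(radius ** 2 - d2)
```

Commanding the screen to this depth as a fixed position, rather than as a force, is what would let a finger sliding across the outline feel the surface rise and fall.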

The display moves in depth based on the user’s finger position against a 3-D object.

“Your finger is always aware of motion,” Pahud explains. “As your finger pushes on the touchscreen and the senses merge with stereo vision, if we do the convergence correctly and update the visuals constantly so that they correspond to your finger’s depth perception, this is enough for your brain to accept the virtual world as real.”

Taking this experiment a step further, the team “blindfolded” subjects by making the screen blank. The goal was to see whether users could identify shapes by touch alone.

“They couldn’t see anything, and the shapes were simple objects,” Sinclair says. “We knew beforehand that complicated objects wouldn’t work, but some of the objects were reasonably sophisticated—a pyramid, a wedge, a cylinder. But I’d say these results were the biggest and most pleasant surprise of the project.”

Pahud agrees.

“I was impressed with how many people got the shapes,” he says. “There were even some subjects who were 100 percent correct. That was definitely a surprise.”

The project showed that a low-bandwidth data channel, a single finger, is enough to model surface contours. The bandwidth is low because a finger touches only one point at a time, yet with sufficient haptic feedback as the finger moves, there is enough information to identify shapes.

Idle Forces at Work

One feature of the Actuated 3-D Display with Haptic Feedback project that users might not notice, but that was essential to its function, was the implementation of a constant idle force.

“It’s a very lightweight force that pushes back to follow the finger and maintain constant contact,” Sinclair explains. “At first, it feels as though you are touching a hard wall that’s easy to push, but you get used to it very quickly because it supplies only a few ounces of force against the finger. Since touchscreen interactions require the user’s finger to remain in contact with the surface, the main challenge of the idle mode is to ensure that the screen remains in contact with the fingertip regardless of the direction that the fingertip is moving, either away or toward the user.”

Thanks to this small idle force, the screen can follow the user’s finger in depth excursions, both positive and negative, until a haptic force beyond the idle force is commanded, such as when touching and interacting with an object.

The team also implemented four additional command modes: force, velocity, position, and detent, where additional forces are added to the idle force depending on the position of the finger and the application requirements. Detent mode, for example, specifies a fixed-position command to the controller, which makes the screen remain exactly at a desired position, effectively canceling the idle force. This adds a brief resistance that creates a haptic signal for the user, analogous to the sensory click of a radio dial when turned off. The detent mode proved extremely useful for exploring volumetric data.
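One way to picture the relationship between the idle force and the other modes is a function that decides what to send to the motor controller on each update. The sketch below covers only two cases described above: an extra force added on top of the idle force, and a detent expressed as a fixed-position command that replaces the force command. The `Command` type, `next_command`, and the 1 N idle value (chosen as a stand-in for "a few ounces") are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

IDLE_FORCE_N = 1.0  # assumed stand-in for "a few ounces" of push-back


@dataclass(frozen=True)
class Command:
    kind: str     # "force" or "position"
    value: float  # newtons for "force", meters for "position"


def next_command(extra_force_n: float = 0.0,
                 detent_depth_m: Optional[float] = None) -> Command:
    """Choose the controller command for the current update.

    A detent is sent as a fixed-position command, which overrides the idle
    force. Otherwise the screen pushes back with the idle force plus any
    extra force the application requests.
    """
    if detent_depth_m is not None:
        return Command("position", detent_depth_m)
    return Command("force", IDLE_FORCE_N + extra_force_n)
```

Calling `next_command()` with no arguments corresponds to plain idle mode, where the screen simply follows the finger.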

Exploring Data Through Touch

From the first discussion about this work, Pahud envisioned a brain—or, rather, a 3-D image of a brain, built from volumetric data.

“I could see an image of the front of a brain,” he says, “and pushing a finger through the layers of the brain to travel through the data. I could imagine receiving haptic feedback when you encountered an anomaly, such as a tumor, because we can change the haptic response based on what you touch. You could have different responses for when you touch soft tissue versus hard tissue, which makes for a very rich experience.”

In contrast to other project applications that apply to 3-D scenes, the volumetric-data-exploration application shows how movement and haptics can enhance interactions with 2-D data. Pahud implemented a volumetric medical-image browser that shows the MRI-scanned data of a human brain. By gently pushing on the screen, the user can explore the data using touch and view different image slices of the brain.
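Browsing slices by pushing amounts to mapping the screen's depth to an index into the image stack. A minimal sketch of such a mapping follows, assuming a linear relationship between depth and slice number. The function name `slice_index` and its parameters are illustrative and do not come from the project.

```python
def slice_index(depth_m: float, travel_m: float, num_slices: int) -> int:
    """Map the screen depth to a slice in the volume.

    depth_m is how far the screen has been pushed, travel_m is the total
    usable travel of the arm, and num_slices is the number of images in
    the stack. Depths outside the travel range are clamped.
    """
    if num_slices < 1 or travel_m <= 0:
        raise ValueError("need at least one slice and a positive travel")
    fraction = min(max(depth_m / travel_m, 0.0), 1.0)
    # The final clamp keeps a fully pushed screen on the last slice.
    return min(int(fraction * num_slices), num_slices - 1)
```

For a 100-slice stack, a screen pushed halfway along its travel would show slice 50 under this mapping.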

The brain browser is not just for exploring data through touch. The prototype is meant to demonstrate how touch interactions can be deployed for meaningful work. For example, if the user is interested in a particular slice and wants to return to that spot later, touching an on-screen button with a non-pointing finger along the left or right side of the screen locks the screen position in place. With the screen locked, the user can use a fingertip to annotate the slice by drawing on the image or adding notes on the side.

The interface provides a method to mark and save depth investigations of interest.

To facilitate search and retrieval of annotated slices, the project implements a haptic detent at each annotated slice. Thus, when the user’s finger moves past an annotated slice, either coming or going, the detent provides tactile resistance to alert the user to the annotation. The user may continue on and push past the detent, but the detent has done its job by making it easier to find annotations through touch, unaided by visuals.
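The detent behavior could be sketched as a check performed on each update: if the current slice is close to an annotated one and the user is not pushing hard, hold the screen at that slice. The function name `detent_slice`, the one-slice window, and the 2 N break-through threshold are assumptions for illustration; the article does not specify how the real system decides.

```python
from typing import Iterable, Optional


def detent_slice(current_slice: int, annotated: Iterable[int],
                 push_force_n: float, window: int = 1,
                 break_force_n: float = 2.0) -> Optional[int]:
    """Return the annotated slice to hold at, or None for no detent.

    A detent engages when the current slice is within `window` slices of
    an annotation. Pushing harder than `break_force_n` releases it, so
    the user can continue past the annotation.
    """
    if push_force_n > break_force_n:
        return None  # user is pushing through the detent
    near = [s for s in sorted(annotated) if abs(s - current_slice) <= window]
    if not near:
        return None
    return min(near, key=lambda s: abs(s - current_slice))
```

A returned slice would then be converted back to a depth and sent to the controller as a fixed-position command.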

The kinesthetic approach offers a new way to augment touchscreen interactions, and Pahud and Sinclair see many opportunities for this type of haptic device.

“There’s always 3-D gaming,” Sinclair says, “but also 3-D modeling, education, and medical. We anticipate improving the experience with crisper, more detailed feedback, such as texture.