By Rob Knies, Managing Editor, Microsoft Research

Multicore and many-core processors represent the future of computing.

Concerns with power consumption and heat management have limited the ability of chip manufacturers to continue to provide more processing power via faster clock speeds. Consequently, to deliver the ever-increasing performance to which we’ve all grown accustomed, processor makers have turned to developing chips with multiple cores to gain efficiency.

The problem is that current operating systems were not designed to support computers with large numbers of processing cores. Efforts are afoot to make existing operating systems work well on existing hardware, but such attempts are incremental at best, and within five or 10 years, we’ll need a new paradigm.

That’s what researchers from Microsoft Research and the Systems Group in the Department of Computer Science at ETH Zurich, the Swiss Federal Institute of Technology, are attempting to define.

They have developed a new operating system for research, called Barrelfish, as a project to determine how best to negotiate this new era of multicore processing.

Work began on Barrelfish in October 2007, starting from scratch, so the researchers involved could explore the implications of multicore computing in detail without the need to worry about existing code in current computer operating systems.

“I see this as a way to explore fairly radical changes to the way people use computers,” says Paul Barham, a principal researcher. “As an operating systems researcher, if you’re starting from an existing operating system and modifying it, there are so many constraints that it’s really difficult to try radical ideas.

“These are the kinds of things that it’s much easier to play with on a clean slate.”

Fellow researcher Rebecca Isaacs, who, like Barham, has moved from Microsoft Research Cambridge to Microsoft Research Silicon Valley as the project has evolved, says Barrelfish represents an opportunity to get ahead of the hardware curve.

“Multicore is the only type of machine that we’re going to be buying soon,” she says. “You’re going to be getting more and more cores on your desktop. We have to rethink how we structure operating systems to deal with this kind of hardware.”

One thing the project does not represent, however, is a new Microsoft operating system. Barrelfish is intended as a proof of concept to establish a research foundation for exploration of this new, multicore hardware.

“We’re certainly not trying,” Barham states, “to come up with a replacement for Windows.”

Adds Isaacs: “What we are doing is structuring the software in a radically different way that will be more appropriate for the kinds of machines that we see emerging over the next five to 10 years.”

In recent years, computer hardware has diversified at an increasing rate, more so than system software. Consequently, it is no longer realistic to fine-tune an operating system for a particular hardware model; the models in deployment vary wildly, and optimizations become obsolete quickly as the hardware evolves.

Barham and longtime collaborator Timothy Roscoe from ETH Zurich have been discussing operating systems for more than a decade, since they were Ph.D. students developing the Nemesis research operating system at the University of Cambridge.

“A few years ago,” Barham recalls, “we were on a train, discussing why there were still so many things that really haven’t changed much with operating systems. We wanted an experimental platform to let us play with new directions for operating systems.

“That’s where the name Barrelfish came from. We could think of so many ideas that it would be like shooting fish in a barrel. Mothy used to work for Intel Research, where he gained insights into how processors were evolving across the industry, inspiring him to consider how operating systems would need to change to make the most of these evolutions. We could not see how current operating systems would be able to run on different types of hardware without having to rewrite them from scratch.”

It helped that Roscoe, now a computer science professor at ETH Zurich, which has a significant focus on technological research, had Ph.D. students of his own who were eager to work on such a project.

“It seemed to us, and many others, that the hard challenges for future hardware, whether in a home, office, or data center, were really networking problems,” Roscoe recalls. “We were throwing all sorts of ideas around about how to program and manage collections of machines in new ways. But as we talked, it became clear that in the future, even within a single machine or a single OS, you would be looking at a networking problem, and that is really different from the way that current operating systems are designed.”

As it turns out, Barham notes, many compelling research scenarios followed.

“Initially, we wanted to look at how, if you have more than one computer in your house or office, you could use all of those machines together as a single system,” he says. “That’s where I thought we would be doing research. But the way hardware’s evolving, there are lots of interesting problems just designing an OS that was future-proof and could run on all the types of machines people are proposing. Dealing with that has occupied us for most of the early years of the project, and we haven’t gotten around to shooting many fish yet.”

The main challenges for the Barrelfish researchers are scalability, expected to become increasingly difficult as the number of cores per chip increases, and processor and system heterogeneity.

“In the next five to 10 years,” Barham predicts, “there are going to be many varieties of multicore machines. There are going to be a small number of each type of machine, and you won’t be able to afford to spend two years rewriting an operating system to work on each new machine that comes out. Trying to write the OS so it can be installed on a completely new computer it’s never seen before, measure things, and think about the best way to optimize itself on this computer—that’s quite a different approach to making an operating system for a single, specific multiprocessor.”

The problem, the researchers say, stems from the use of a shared-memory kernel with data structures protected by locks. The Barrelfish project opts instead for a distributed system in which each unit communicates explicitly.

Different Processors, Different Speeds

“If you look inside a modern computer,” Barham says, “you have a number of potentially different processors running at different speeds, and there’s a complicated interconnect between them, potentially lots of caches. This causes the insides of the single computer to behave as though it were a bunch of computers on a network.

“Historically, when you get many computers on a network, you use distributed-systems techniques to deal with high communication latencies. There is a lot of theory analyzing how to make distributed systems work well. We’re trying to take all of these good results from the last 15, 20 years and retarget them within a single computer, rather than using them on a bunch of machines on a network.”

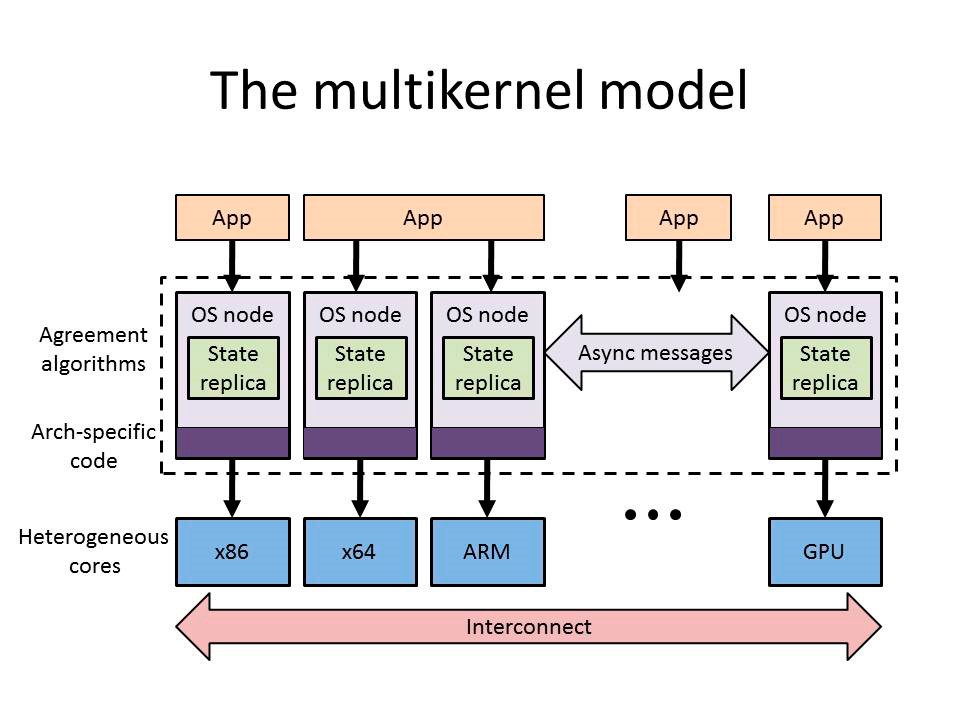

This constitutes a radically different operating system structure, one based on the concept of a “multikernel.”

“We’re thinking abstractly about the issues we want to address,” Isaacs says. “Can we come up with a reference model for how we think an operating system ought to be structured? That’s what the multikernel is. It’s a model that describes the concept of structuring the operating system as a distributed system. We have the operating system state replicated on every node, and the operating system instances running on each core send messages to each other. Barrelfish is one implementation of the multikernel model.”

The project, as described in the paper The Multikernel: A new OS architecture for scalable multicore systems, delivered in October 2009 during the Association for Computing Machinery’s 22nd Symposium on Operating Systems Principles, features three design principles:

- All intercore communications are made explicit.

- The operating system structure is independent of any particular hardware-performance characteristics.

- The state is viewed as replicated, instead of shared.

“In a normal multiprocessor operating system,” Barham explains, “all the processors would manipulate the same kernel-based structures using shared memory. Because all of these processors have caches, you end up with lots of little parts of the kernel data structures in the caches of each processor. Even though each core thinks it’s manipulating the same data structure, what’s actually happening is the hardware has to keep lots of copies of your operating system data structures consistent.

“The cache-coherence protocols are getting extremely difficult to scale up to large numbers of processors. The operating system writer must think hard about which bits of the kernel state get copied between which processors when an operation is performed. When an update is made, which other processors need to know about it? Which data need to go to all the other cores? The idea of the multikernel is to make all those communication patterns explicit, so that rather than having to second-guess which cache lines get dragged around inside the machine, you run a separate kernel on each processor, and when you make a change, you send an explicit message describing the change to all the other cores. That is much more like a distributed system than a shared-memory program with threads.”
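The pattern Barham describes can be illustrated with a toy model. The following is only a minimal sketch, not Barrelfish code: the `CoreKernel` class, its `inbox` queue, and the `make_cores` helper are hypothetical names invented for illustration, and the sketch assumes reliable, in-order delivery with no conflicting updates, which a real multikernel could not take for granted.

```python
from collections import deque


class CoreKernel:
    """Hypothetical per-core kernel holding a private replica of OS state."""

    def __init__(self, core_id):
        self.core_id = core_id
        self.state = {}       # local replica; no other core reads or writes it
        self.inbox = deque()  # explicit message channel into this core
        self.peers = []       # the other per-core kernels

    def update(self, key, value):
        """Apply a change locally, then describe it to every other core."""
        self.state[key] = value
        for peer in self.peers:
            peer.inbox.append((self.core_id, key, value))

    def drain(self):
        """Apply all pending update messages to the local replica."""
        while self.inbox:
            _sender, key, value = self.inbox.popleft()
            self.state[key] = value


def make_cores(n):
    """Create n per-core kernels that all know about one another."""
    cores = [CoreKernel(i) for i in range(n)]
    for core in cores:
        core.peers = [p for p in cores if p is not core]
    return cores


cores = make_cores(4)
cores[0].update("frame_7_owner", "core0")  # core 0 changes its replica
for core in cores:
    core.drain()                           # the others apply the message
```

Between the `update` call and the `drain` loop, the other replicas are stale. The point of the sketch is that this window is visible in the code as a queued message, rather than hidden in cache lines moved around by the hardware.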

Most software applications assume the existence of shared memory that’s cache-coherent. Processor architects, though, are beginning to consider dispensing with cache-coherent memory, because, without it, multicore chips can operate much faster.

Programmable Cache Coherence

“What seems likely,” Barham adds, “is that, with machines with hundreds of cores, if they do have cache coherence, it won’t be between all of the processors. It will be programmable in some way. We’ve got to think of a way for the operating system to expose that to applications in a useful fashion.”

Barrelfish employs a component called a “system knowledge base.” When the operating system boots, it probes the hardware and measures how quickly the processors can communicate with one another. That information is recorded in a small database, and the operating system then runs a small program that uses it to optimize communication: choosing the best protocols to use and the best algorithms to keep all the system’s data structures consistent and efficient. The resultant performance is comparable to that of a conventional operating system.
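To make the idea concrete, here is a rough sketch of how measured latencies might drive a communication decision. It is an illustration under stated assumptions, not the Barrelfish system knowledge base: the function names, the dictionary standing in for the database, and the latency figures are all hypothetical, and latencies are assumed to be symmetric.

```python
def record(kb, a, b, latency_ns):
    """Store one measurement; latency is assumed symmetric (an assumption)."""
    kb[(a, b)] = latency_ns
    kb[(b, a)] = latency_ns


def best_broadcast_root(kb, cores):
    """Pick the core whose slowest link to any other core is fastest."""
    def worst_link(root):
        return max(kb[(root, c)] for c in cores if c != root)
    return min(cores, key=worst_link)


def nearest_first(kb, src, cores):
    """Order the other cores by measured latency from src, closest first."""
    return sorted((c for c in cores if c != src), key=lambda c: kb[(src, c)])


# Hypothetical boot-time measurements for a four-core machine in which
# cores 0-1 share one socket and cores 2-3 share another.
kb = {}
cores = [0, 1, 2, 3]
record(kb, 0, 1, 40)
record(kb, 2, 3, 40)
record(kb, 0, 2, 120)
record(kb, 0, 3, 130)
record(kb, 1, 2, 100)
record(kb, 1, 3, 110)

root = best_broadcast_root(kb, cores)   # core 1 for the numbers above
order = nearest_first(kb, 0, cores)     # [1, 2, 3] for the numbers above
```

On a machine with a different interconnect, the same queries would return different answers without any change to the code, which is the property the researchers are after.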

There are some academic projects, the researchers note, that address specific problems to help existing operating systems cope with multicore processors, but none approach the scope of the Barrelfish effort.

“This project,” Barham says, “is quite ambitious—sprawling, actually.”

And there’s more to come. The researchers also are examining other components based on the Barrelfish foundation, such as an asynchronous programming model and a parallel file system.

On such a substantial project, there is room for many contributors. Among those who have played a key role on Barrelfish is Tim Harris, a researcher from Microsoft Research Cambridge who is developing extensions to the C programming language to help programmers with the notoriously challenging asynchronous communication used throughout Barrelfish. Harris also has organized funding for external groups to work on Barrelfish.

Andrew Baumann acted as architectural lead while a post-doctoral researcher at ETH Zurich and did much of the low-level implementation, along with Ph.D. students Simon Peter and Adrian Schüpbach, the latter of whom also designed and implemented Barrelfish’s system knowledge base. Baumann’s impressive work on the Barrelfish project led directly to his hiring by Microsoft Research Redmond.

At ETH Zurich, Pravin Shinde and Stefan Kästle are using Barrelfish as the basis of their Ph.D. research, while Orion Hodson and Richard Black from Microsoft Research Cambridge have ported Barrelfish to interesting new processor architectures. And Vijayan Prabhakaran, a researcher from Microsoft Research Silicon Valley, is exploring a file system for the operating system.

In the summer of 2010, Barham and Isaacs relocated from Microsoft Research Cambridge to Microsoft Research Silicon Valley to avail themselves of that facility’s focus on distributed computing.

“I did an internship with Mike Schroeder [assistant managing director of Microsoft Research Silicon Valley] back in 1995,” Barham says. “He’s been trying to convince me to work for him for the last 15 years. I finally gave in.”

Prized Partners

In California, Barham and Isaacs have encountered a wealth of potential new partners—including Chuck Thacker, a Microsoft technical fellow who was named winner of the 2009 A.M. Turing Award, commonly known as the Nobel Prize of computing.

“One of the platforms we’re porting Barrelfish to,” Barham says, “is the Beehive platform and processor designed by Chuck Thacker. He’s one of the processor architects whose opinion we solicited when trying to work out what hardware should look like. He believes that cache coherence will not be around forever.

“And,” Barham adds, “working with a Turing Award winner has its appeals.”

The project has continued to progress since the Microsoft researchers’ move to California. A new collaborative research agreement with ETH Zurich was signed in late February. A new version of Barrelfish was released recently, adding support for Intel’s Single-chip Cloud Computer and Beehive, both experimental many-core research platforms with hardware-messaging support and no cache coherence.

In addition, Microsoft Research Connections has provided grants to develop a research community around Barrelfish, one that would extend beyond the initial participants from Microsoft Research and ETH Zurich. Support for that community reflects growing interest by the broader research ecosystem in using Barrelfish.

For example, in Kista, Sweden, Mats Brorsson, a professor at the KTH School of Information and Communications Technology, is exploring parallel programming with Barrelfish, working with Georgios Varisteas, a Microsoft-funded Ph.D. candidate. And at the BSC-Microsoft Research Centre, Vicenç Beltran and Zeus Gómez Marmolejo are working on StarSs, a system in which they are applying ideas from the design of superscalar microprocessors to the design of parallel software.

Meanwhile, the researchers remain focused on making Barrelfish more usable and robust, continuing their research and publishing papers about their work, and encouraging more users from research institutions to take a look. The source code has been released publicly under the open-source MIT License for use in the academic research community.

“We’re starting to believe we understand the low-level parts of the operating system,” Barham says. “Now, we’re trying to think more up the software stack. When end users want to write programs on this operating system, what does that look like? How should application code interact with the operating system? That’s probably where we’re going next.

“It’s becoming increasingly common that people are writing parallel programs. In a few years’ time, most people’s desktop machines will have large numbers of programs that all want to make use of multiple processors, large numbers of cores. When you have more than one parallel program running on your machine, they all expect to get all of the CPUs, and the operating system is going to have to share out the cores in a fair way. Nobody’s started to deal with this yet, and we think it has consequences for the way applications and language runtimes need to be written.”

But as their explorations continue, Barham and Isaacs remain realistic about what they expect to achieve in the short term. Isaacs has some tangible results in sight.

“Real-world operating systems people—are they going to learn anything from what we publish?” she asks. “That would be quite a success, if the Windows guys read our papers and say, ‘Ah, that’s interesting.’ And likewise, the processor architects, as well. Intel’s people are quite interested in what’s happening with Barrelfish.

“The third strand is stimulating academics to start doing serious research in operating systems again. It’s a sort of hearts-and-minds approach, rather than anything concrete.”

Barham takes a more philosophical stance. He understands that it will take time for the research he is pursuing to bear fruit.

“As an OS researcher,” he says, “when you start working on things like this, you rarely expect the world to change immediately.”