Sparse Stochastic Bandits

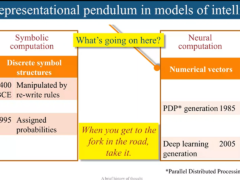

Many learning problems, such as choosing which ad to put on a website, or what movie to recommend to a user, can be cast as stochastic bandit problems. The key here is that in these problems learning and decision making are interleaved: The actions chosen influence the information received and hence the learning strategy must carefully balance between choosing actions to collect information or to accumulate reward. Today’s bandit problems involve tens of thousands or even more actions. A popular approach to scaling up bandit problems to work with large action spaces is to assume that the expected payoff can be closely approximated as a linear map from the features of actions. Using more features helps one to improve this approximation, but when the number of features is also large (maybe also tens of thousands), learning will be slow. A popular assumption then is that the true unknown parameter vector is sparse. In supervised learning, such an assumption is known to help to increase the learning speed. Can sparsity of the parameter vector be exploited in bandit problems? How can one design algorithms that take advantage of parameter sparsity? In this talk we will show an approach, which is based on two reductions between different learning problems, that helps one to answer these questions (in particular, answers the first question positively). The first reduction concerns reducing the sparse stochastic bandit problem to designing (tight) confidence sets for sparse linear regression (and is more or less standard), while the second one concerns reducing the problem of designing confidence sets for sparse linear regression to designing low-regret algorithms for linear prediction under the squared loss in an online, *adversarial* framework. We demonstrate the effectiveness of the approach on some numerical examples. The talk is based on the paper Abbasi-Yadkori, Y., Pál, D., and Szepesvári, Cs., Online-to-confidence-set conversions and application to sparse stochastic bandits, AISTAT, pp. 1—9, 2012. http://www.ualberta.ca/~szepesva/papers/online-to-confidenceset.pdf with some new ideas, time permitting. Only general knowledge of machine learning is assumed.

Speaker Details

Csaba Szepesvari (PhD’99) is currently a Professor at the the Department of Computing Science of the University of Alberta and a Principal Investigator of the Alberta Innovates Center for Machine Learning, before which he was a senior researcher of the Computer and Automation Research Institute of the Hungarian Academy of sciences and held various industrial positions. The coauthor of a book on nonlinear approximate adaptive controllers and the author of a short book on Reinforcement Learning, he published about 150 journal and conference papers. He is best known for the UCT algorithm, which led to a leap in the performance of planning and search algorithms in many domains, in particular in computer go. He is an Action Editor of the Journal of Machine Learning Research and the Machine Learning Journal. His research interests include reinforcement learning, online learning and statistical learning theory.

- Series:

- Microsoft Research Talks

- Date:

- Speakers:

- Csaba Szepesvari

-

-

Jeff Running

-

Series: Microsoft Research Talks

-

-

-

-

Galea: The Bridge Between Mixed Reality and Neurotechnology

Speakers:- Eva Esteban,

- Conor Russomanno

-

Current and Future Application of BCIs

Speakers:- Christoph Guger

-

Challenges in Evolving a Successful Database Product (SQL Server) to a Cloud Service (SQL Azure)

Speakers:- Hanuma Kodavalla,

- Phil Bernstein

-

Improving text prediction accuracy using neurophysiology

Speakers:- Sophia Mehdizadeh

-

-

DIABLo: a Deep Individual-Agnostic Binaural Localizer

Speakers:- Shoken Kaneko

-

-

Recent Efforts Towards Efficient And Scalable Neural Waveform Coding

Speakers:- Kai Zhen

-

-

Audio-based Toxic Language Detection

Speakers:- Midia Yousefi

-

-

From SqueezeNet to SqueezeBERT: Developing Efficient Deep Neural Networks

Speakers:- Sujeeth Bharadwaj

-

Hope Speech and Help Speech: Surfacing Positivity Amidst Hate

Speakers:- Monojit Choudhury

-

-

-

-

-

'F' to 'A' on the N.Y. Regents Science Exams: An Overview of the Aristo Project

Speakers:- Peter Clark

-

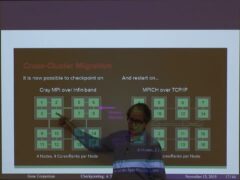

Checkpointing the Un-checkpointable: the Split-Process Approach for MPI and Formal Verification

Speakers:- Gene Cooperman

-

Learning Structured Models for Safe Robot Control

Speakers:- Ashish Kapoor

-

-