Using FPGAs to Simulate Novel Datacenter Network Architecture at Scale

The tremendous success of Internet services has led to the rapid growth of Warehouse-Scale Computers (WSCs), and the networking infrastructure has become one of the most vital components in a datacenter. With a rapidly evolving set of workloads and software, evaluating network designs really requires simulating a computer system with three key features. To avoid the high capital cost of hardware prototyping, many designs have been evaluated only on very small testbeds built from off-the-shelf devices, often running unrealistic microbenchmarks or traces collected from an old cluster. Many evaluations assume that the workload is static and that computations are only loosely coupled with the highly adaptive networking stack. We argue that the research community is facing a hardware-software co-evaluation crisis.

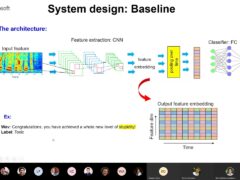

In this talk, we present a novel, cost-efficient evaluation methodology called Datacenter-in-a-Box at Low cost (DIABLO), which uses Field-Programmable Gate Arrays (FPGAs) and treats datacenters as whole computers with tightly integrated hardware and software. Instead of prototyping everything in FPGAs, we build realistic, reconfigurable, abstracted performance models at scales of O(10,000) servers. Our server model runs the full Linux operating system and an open-source datacenter software stack, including production software such as memcached. It achieves a simulation speedup of two orders of magnitude over software-based simulators, which enables us to run the full datacenter software stack for O(100) seconds of simulated time. We have built a DIABLO prototype of a 2,000-node simulated cluster with a runtime-configurable 10 Gbps interconnect using six multi-FPGA BEE3 boards. Using DIABLO simulation, we have successfully reproduced several datacenter phenomena at large scale, such as TCP incast and the long tail of request latency.

Speaker Details

Zhangxi Tan is a PhD candidate in the Computer Science Division of UC Berkeley. He is working on the Research Accelerator for Multiple Processors (RAMP) project in the Parallel Computing Lab with Prof. David Patterson and Prof. Krste Asanovic. His current project is hardware and software co-simulation of novel datacenter network architectures using FPGAs.

- Series: Microsoft Research Talks
- Speakers: Zhangxi Tan
- Affiliation: UC Berkeley
