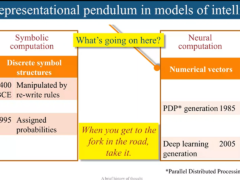

Deep Learning of Representations

The success of machine learning algorithms generally depends on data representation, and we hypothesize that this is because different representations can entangle and hide, to a greater or lesser degree, the different explanatory factors of variation behind the data. Although specific domain knowledge can help design representations, learning with generic priors can also be used, and the quest for AI is motivating the design of more powerful representation-learning algorithms that implement such priors. This talk reviews recent work in the area of unsupervised feature learning and deep learning, focusing on advances in understanding the probabilistic and geometric (manifold) aspects of regularized auto-encoders. This motivates longer-term unanswered questions about the appropriate objectives for learning good representations, for computing representations (i.e., inference), and the geometrical connections between representation learning, density estimation and manifold learning. Finally, the talk will briefly discuss the important question of why training deep or recurrent networks is difficult (a question that matters for scaling them towards AI) and recent advances in this regard.

Speaker Details

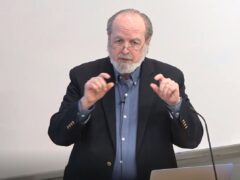

Yoshua Bengio received a PhD in Computer Science from McGill University, Canada in 1991. After two post-doctoral years, one at M.I.T. with Michael Jordan and one at AT&T Bell Laboratories with Yann LeCun and Vladimir Vapnik, he became a professor at the Department of Computer Science and Operations Research at Université de Montréal. He is the author of two books and around 200 publications, the most cited being in the areas of deep learning, recurrent neural networks, probabilistic learning algorithms, natural language processing and manifold learning. He is among the most cited Canadian computer scientists and is or has been associate editor of the top journals in machine learning and neural networks. Since 2000 he has held a Canada Research Chair in Statistical Learning Algorithms, since 2006 an NSERC Industrial Chair, and since 2005 he has been a Fellow of the Canadian Institute for Advanced Research. He is on the board of the NIPS foundation and has been program chair and general chair for NIPS. He has co-organized the Learning Workshop for 14 years and co-created the new International Conference on Learning Representations. His current interests are centered around his quest for AI through machine learning, and include fundamental questions on deep learning and representation learning, the geometry of generalization in high-dimensional spaces, manifold learning, biologically inspired learning algorithms, and challenging applications of statistical machine learning. At the beginning of 2013, Google Scholar finds more than 12,000 citations to his work, yielding an h-index of 47.

- Series: Microsoft Research Talks
- Speakers: Yoshua Bengio
- Affiliation: University of Montreal

Jeff Running

Series: Microsoft Research Talks

Galea: The Bridge Between Mixed Reality and Neurotechnology

Speakers: Eva Esteban, Conor Russomanno

Current and Future Application of BCIs

Speakers: Christoph Guger

Challenges in Evolving a Successful Database Product (SQL Server) to a Cloud Service (SQL Azure)

Speakers: Hanuma Kodavalla, Phil Bernstein

Improving text prediction accuracy using neurophysiology

Speakers: Sophia Mehdizadeh

DIABLo: a Deep Individual-Agnostic Binaural Localizer

Speakers: Shoken Kaneko

Recent Efforts Towards Efficient And Scalable Neural Waveform Coding

Speakers: Kai Zhen

Audio-based Toxic Language Detection

Speakers: Midia Yousefi

From SqueezeNet to SqueezeBERT: Developing Efficient Deep Neural Networks

Speakers: Sujeeth Bharadwaj

Hope Speech and Help Speech: Surfacing Positivity Amidst Hate

Speakers: Monojit Choudhury

'F' to 'A' on the N.Y. Regents Science Exams: An Overview of the Aristo Project

Speakers: Peter Clark

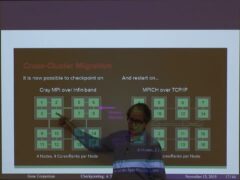

Checkpointing the Un-checkpointable: the Split-Process Approach for MPI and Formal Verification

Speakers: Gene Cooperman

Learning Structured Models for Safe Robot Control

Speakers: Ashish Kapoor