Realtime Facial Animation

Recent advances in realtime performance capture have brought a new form of human communication within reach. By capturing a user's dynamic facial expressions and retargeting them to a digital character in realtime, users can animate arbitrary virtual avatars with live feedback. Recorded video streams offer only limited ability to alter one's appearance. In contrast, this technology opens the door to new applications in computer gaming, social networks, television, training, customer support, and other forms of online interaction.

In this talk, I will present a new algorithm for realtime face tracking on commodity RGB-D sensing devices such as the Kinect. Our method requires no user-specific training, calibration, or any other form of manual assistance. This enables a range of new consumer-level applications in performance-based facial animation and virtual interaction. Compelling 3D facial dynamics can be reconstructed in realtime without face markers, intrusive lighting, or complex scanning hardware.

Speaker Details

Sofien is a third-year PhD student in the Computer Graphics and Geometry Laboratory at the École Polytechnique Fédérale de Lausanne (EPFL), under the supervision of Prof. Mark Pauly. He received his MSc degree in Computer Science from EPFL in 2009 and completed his master's thesis at the Imaging Group of Mitsubishi Electric Research Laboratories (MERL). His research interests include computer graphics, computer vision, and machine learning. Sofien is a co-founder of faceshift AG, an EPFL spin-off that brings high-quality markerless facial motion capture to the consumer market. He is co-developing the company's facial motion capture software, faceshift studio.

http://lgg.epfl.ch/~bouaziz/

http://www.faceshift.com

- Series: Microsoft Research Talks
- Speakers: Sofien Bouaziz
- Affiliation: EPFL


Galea: The Bridge Between Mixed Reality and Neurotechnology

Speakers:- Eva Esteban,

- Conor Russomanno

-

Current and Future Application of BCIs

Speakers:- Christoph Guger

-

Challenges in Evolving a Successful Database Product (SQL Server) to a Cloud Service (SQL Azure)

Speakers:- Hanuma Kodavalla,

- Phil Bernstein

-

Improving text prediction accuracy using neurophysiology

Speakers:- Sophia Mehdizadeh

-

-

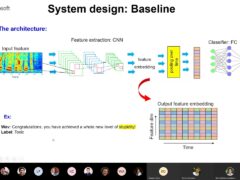

DIABLo: a Deep Individual-Agnostic Binaural Localizer

Speakers:- Shoken Kaneko

-

-

Recent Efforts Towards Efficient And Scalable Neural Waveform Coding

Speakers:- Kai Zhen

-

-

Audio-based Toxic Language Detection

Speakers:- Midia Yousefi

-

-

From SqueezeNet to SqueezeBERT: Developing Efficient Deep Neural Networks

Speakers:- Sujeeth Bharadwaj

-

Hope Speech and Help Speech: Surfacing Positivity Amidst Hate

Speakers:- Monojit Choudhury

-

-

-

-

-

'F' to 'A' on the N.Y. Regents Science Exams: An Overview of the Aristo Project

Speakers:- Peter Clark

-

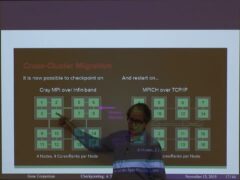

Checkpointing the Un-checkpointable: the Split-Process Approach for MPI and Formal Verification

Speakers:- Gene Cooperman

-

Learning Structured Models for Safe Robot Control

Speakers:- Ashish Kapoor

-

-