Learning Mixtures of Arbitrary Distributions over Large Discrete Domains

We give an algorithm for learning a mixture of unstructured distributions. This problem arises in various unsupervised learning scenarios, for example in learning topic models from a corpus of documents spanning several topics. We show how to learn the constituents of a mixture of k arbitrary distributions over a large discrete domain [n] = {1,2,…,n} and the mixture weights, using O(n polylog n) samples. (In the topic-model learning setting, the mixture constituents correspond to the topic distributions.)

This task is information-theoretically impossible for k > 1 under the usual sampling process from a mixture distribution. However, there are situations (such as the above-mentioned topic model case) in which each sample point consists of several observations from the same mixture constituent. This number of observations, which we call the “sampling aperture”, is a crucial parameter of the problem.
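The role of the aperture can be illustrated with a small toy computation. The sketch below is not part of the talk: the function name `aperture_distribution` and the two example mixtures are hypothetical, chosen only to show two different mixtures that look identical at aperture 1 and differ at aperture 2. The domain is indexed 0..n-1 for convenience.

```python
from fractions import Fraction as F
from itertools import product


def aperture_distribution(weights, constituents, aperture):
    """Exact distribution of one sample point: a constituent is picked
    according to `weights`, then `aperture` i.i.d. observations are
    drawn from that same constituent."""
    n = len(constituents[0])
    dist = {}
    for outcome in product(range(n), repeat=aperture):
        prob = F(0)
        for w, p in zip(weights, constituents):
            term = w
            for x in outcome:
                term *= p[x]
            prob += term
        dist[outcome] = prob
    return dist


half = F(1, 2)
# Hypothetical mixture A: two point masses on a two-element domain.
mix_a = ([half, half], [[F(1), F(0)], [F(0), F(1)]])
# Hypothetical mixture B: two copies of the uniform distribution.
mix_b = ([half, half], [[half, half], [half, half]])

# With one observation per sample point the mixtures are indistinguishable.
same_at_1 = aperture_distribution(*mix_a, 1) == aperture_distribution(*mix_b, 1)
# With two observations per sample point they differ.
same_at_2 = aperture_distribution(*mix_a, 2) == aperture_distribution(*mix_b, 2)
print(same_at_1, same_at_2)
```

In this toy case, mixture A always produces two equal observations, while mixture B produces every pair with equal probability, so a second observation per sample point is what separates them.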

We obtain the first bounds for this mixture-learning problem without imposing any assumptions on the mixture constituents. We show that efficient learning is possible exactly at the information-theoretically least-possible aperture of 2k-1. Thus, we achieve near-optimal dependence on n and optimal aperture. While the sample-size required by our algorithm depends exponentially on k, we prove that such a dependence is unavoidable when one considers general mixtures.
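To make the input format concrete, here is a minimal sketch of the sampling process at aperture 2k-1. It only generates data in the form described above and does not implement the learning algorithm. The function name `sample_points`, the example weights, and the example constituents are assumptions made for illustration, and the domain is indexed 0..n-1.

```python
import random


def sample_points(weights, constituents, num_points, seed=0):
    """Draw sample points from a k-component mixture, each consisting of
    2k-1 observations from a single (hidden) constituent."""
    rng = random.Random(seed)
    k = len(weights)
    n = len(constituents[0])
    aperture = 2 * k - 1
    points = []
    for _ in range(num_points):
        # Pick the hidden constituent according to the mixture weights.
        j = rng.choices(range(k), weights=weights)[0]
        # Draw all observations of this sample point from that constituent.
        obs = rng.choices(range(n), weights=constituents[j], k=aperture)
        points.append(obs)
    return points


# Hypothetical mixture with k = 2 constituents over a domain of size n = 4.
weights = [0.3, 0.7]
constituents = [[0.7, 0.2, 0.1, 0.0], [0.0, 0.1, 0.2, 0.7]]
points = sample_points(weights, constituents, num_points=5)
for p in points:
    print(p)
```

With k = 2 each sample point holds 2k-1 = 3 observations; in the topic-model reading, a sample point is a short document whose words all come from one topic.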

The algorithm draws on a sequence of tools, including concentration results for random matrices, dimension reduction, moment estimation, and sensitivity analysis.

Joint work with Leonard Schulman and Chaitanya Swamy.

Speaker Details

Yuval Rabani is a professor of Computer Science and Engineering at the Hebrew University of Jerusalem. Yuval’s research interests are in approximation algorithms, online algorithms and more broadly in the theory of combinatorial algorithms and discrete optimization. He is fascinated by the design and analysis of algorithms for several reasons: it’s an attempt to explore the limits of efficient computation, it’s a means to study the intrinsic properties of fundamental combinatorial problems, and there’s always some hope to find efficient solutions that might be useful in practice.

- Series: Microsoft Research Talks

- Date:

- Speakers: Yuval Rabani

- Affiliation: The Hebrew University of Jerusalem


Jeff Running

-

Series: Microsoft Research Talks

-

-

-

-

Galea: The Bridge Between Mixed Reality and Neurotechnology

Speakers:- Eva Esteban,

- Conor Russomanno

-

Current and Future Application of BCIs

Speakers:- Christoph Guger

-

Challenges in Evolving a Successful Database Product (SQL Server) to a Cloud Service (SQL Azure)

Speakers:- Hanuma Kodavalla,

- Phil Bernstein

-

Improving text prediction accuracy using neurophysiology

Speakers:- Sophia Mehdizadeh

-

-

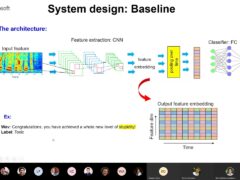

DIABLo: a Deep Individual-Agnostic Binaural Localizer

Speakers:- Shoken Kaneko

-

-

Recent Efforts Towards Efficient And Scalable Neural Waveform Coding

Speakers:- Kai Zhen

-

-

Audio-based Toxic Language Detection

Speakers:- Midia Yousefi

-

-

From SqueezeNet to SqueezeBERT: Developing Efficient Deep Neural Networks

Speakers:- Sujeeth Bharadwaj

-

Hope Speech and Help Speech: Surfacing Positivity Amidst Hate

Speakers:- Monojit Choudhury

-

-

-

-

-

'F' to 'A' on the N.Y. Regents Science Exams: An Overview of the Aristo Project

Speakers:- Peter Clark

-

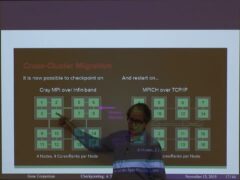

Checkpointing the Un-checkpointable: the Split-Process Approach for MPI and Formal Verification

Speakers:- Gene Cooperman

-

Learning Structured Models for Safe Robot Control

Speakers:- Ashish Kapoor

-

-